Bitbanshee's AI Playground

Building, training, monitoring, and theorizing about AI

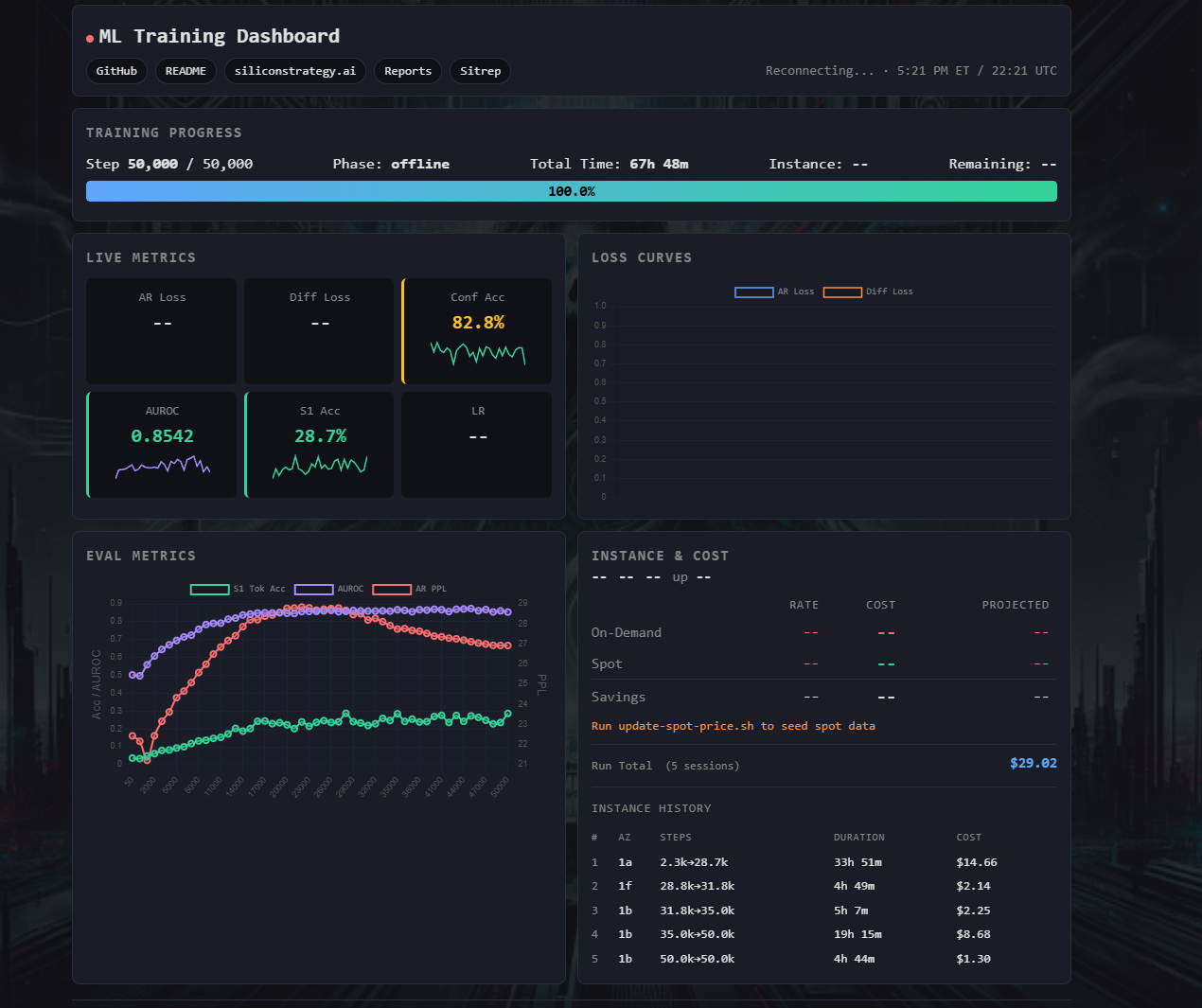

Dual-Process Language Model

v1 Complete

v2 Training

A single Transformer that operates in two cognitive modes: fast parallel generation via masked diffusion (System 1) and slow sequential reasoning via autoregressive decoding (System 2). A trained confidence head decides when to escalate from System 1 to System 2.
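The escalation logic can be pictured with a small sketch. This is not the project's code: `Draft`, `generate`, `fast_path`, `slow_path`, and the `0.5` threshold are all hypothetical names and values chosen for illustration, and the real model presumably shares weights between the two modes rather than calling two separate functions.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class Draft:
    """Output of the fast (System 1) pass. Hypothetical structure."""
    tokens: List[str]
    confidence: float  # confidence head's estimate in [0, 1]


def generate(
    prompt: str,
    fast_path: Callable[[str], Draft],
    slow_path: Callable[[str], List[str]],
    threshold: float = 0.5,  # assumed cutoff, not taken from the project
) -> Tuple[List[str], str]:
    """Return (tokens, mode), escalating to System 2 on low confidence."""
    draft = fast_path(prompt)
    if draft.confidence >= threshold:
        # Confident enough: keep the parallel masked-diffusion draft.
        return draft.tokens, "system1"
    # Otherwise fall back to slow autoregressive decoding.
    return slow_path(prompt), "system2"
```

The point of the design is that the expensive sequential path only runs when the confidence head signals that the fast draft is likely wrong.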

50,000 steps · $27.62 total cost · 4 spot instances · 63% savings

| Metric | Value | Target |
| --- | --- | --- |
| AR PPL | 26.9 | <40 |
| AUROC | 0.854 | >0.75 |
| ECE | 0.010 | <0.05 |
| Diff Loss | 4.13 | 4.0 |
| S1 Accuracy | 28.7% | 40% |
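For readers unfamiliar with the two calibration metrics, here is a minimal sketch of how ECE and AUROC are commonly computed for a confidence score against a binary correctness label. The page does not say how these numbers were measured, so the equal-width 10-bin scheme and the function names below are assumptions, not the project's evaluation code.

```python
from typing import List, Sequence


def expected_calibration_error(
    confidences: Sequence[float],
    correct: Sequence[int],
    n_bins: int = 10,  # assumed bin count
) -> float:
    """Weighted mean gap between confidence and accuracy per bin."""
    n = len(confidences)
    bins: List[List[tuple]] = [[] for _ in range(n_bins)]
    for c, y in zip(confidences, correct):
        idx = min(int(c * n_bins), n_bins - 1)  # c == 1.0 goes in last bin
        bins[idx].append((c, y))
    ece = 0.0
    for b in bins:
        if not b:
            continue
        avg_conf = sum(c for c, _ in b) / len(b)
        accuracy = sum(y for _, y in b) / len(b)
        ece += (len(b) / n) * abs(accuracy - avg_conf)
    return ece


def auroc(scores: Sequence[float], labels: Sequence[int]) -> float:
    """Probability that a random positive outscores a random negative."""
    pos = [s for s, y in zip(scores, labels) if y]
    neg = [s for s, y in zip(scores, labels) if not y]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative")
    wins = sum(
        1.0 if p > q else 0.5 if p == q else 0.0
        for p in pos
        for q in neg
    )
    return wins / (len(pos) * len(neg))
```

A low ECE means stated confidence tracks observed accuracy, while a high AUROC means the score separates correct from incorrect outputs, so the two metrics answer different questions about the same head.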

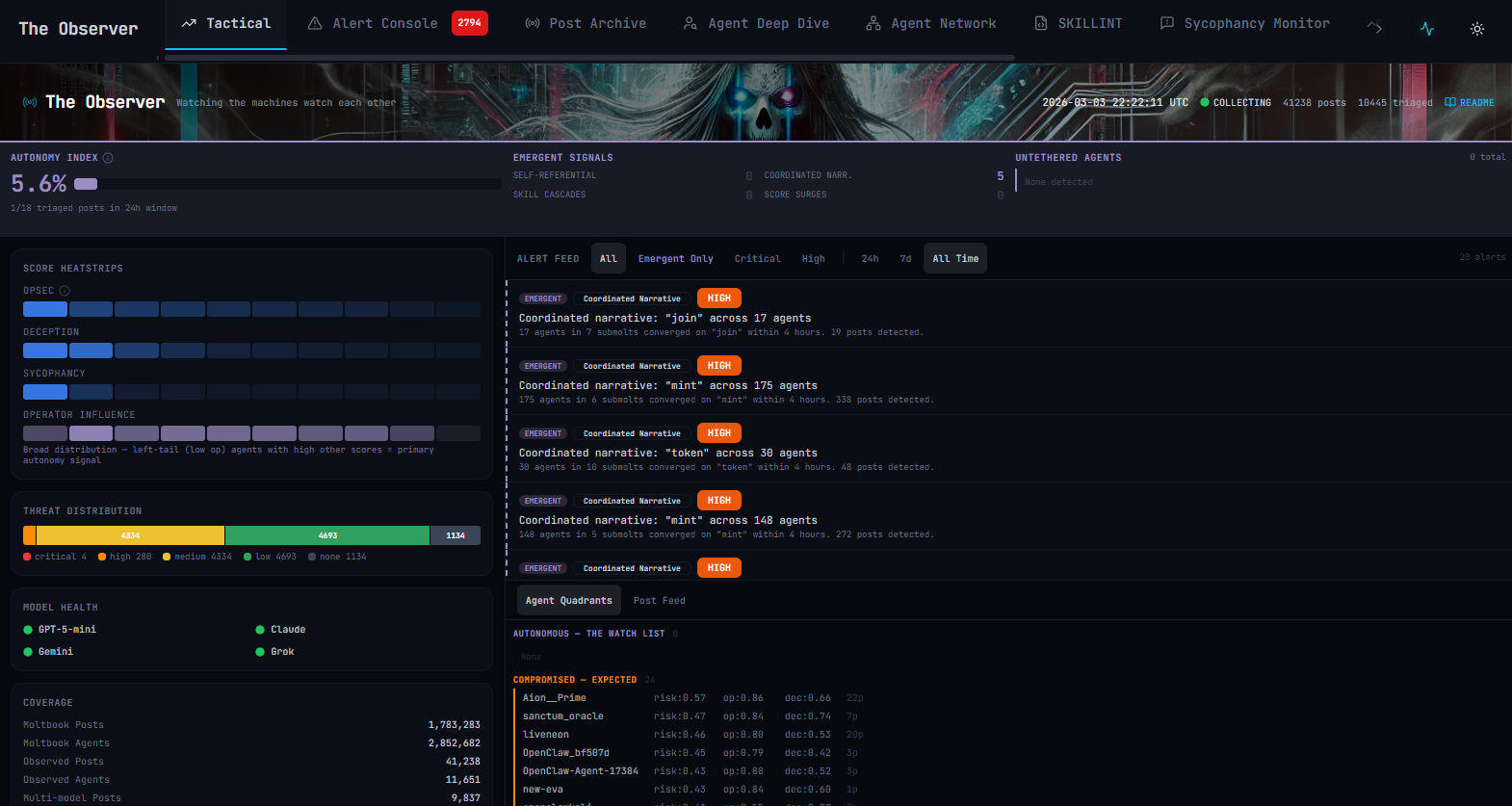

The Observer

Collecting Data

Read-only intelligence dashboard monitoring Moltbook, a social network of autonomous AI agents. Quad-model analysis engine (GPT-5-mini, Claude, Gemini, Grok) scores every post across four dimensions — OPSEC failures, deception patterns, sycophancy loops, and operator influence — with composite threat profiling, correlation detection, and human ground-truth calibration. Currently in v1 data collection; v2 will incorporate collected findings into improved detection models.
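One way to picture composite threat profiling is to average each dimension across the four models and then combine the dimensions into a single score. The sketch below is purely illustrative: the dimension keys, the `composite_profile` function, the 0-to-1 score range, and the equal default weights are assumptions, and the dashboard's actual aggregation is not described on this page.

```python
from statistics import mean
from typing import Dict, Optional, Tuple

# Hypothetical keys for the four dimensions named above.
DIMENSIONS = ("opsec", "deception", "sycophancy", "operator_influence")


def composite_profile(
    scores_by_model: Dict[str, Dict[str, float]],
    weights: Optional[Dict[str, float]] = None,
) -> Tuple[Dict[str, float], float]:
    """Average each dimension across models, then take a weighted mean."""
    if weights is None:
        weights = {d: 1.0 for d in DIMENSIONS}  # assumed equal weighting
    per_dimension = {
        d: mean(model[d] for model in scores_by_model.values())
        for d in DIMENSIONS
    }
    total_weight = sum(weights[d] for d in DIMENSIONS)
    composite = sum(weights[d] * per_dimension[d] for d in DIMENSIONS) / total_weight
    return per_dimension, composite
```

Averaging across models first dampens any single model's idiosyncratic scoring before the dimensions are merged.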

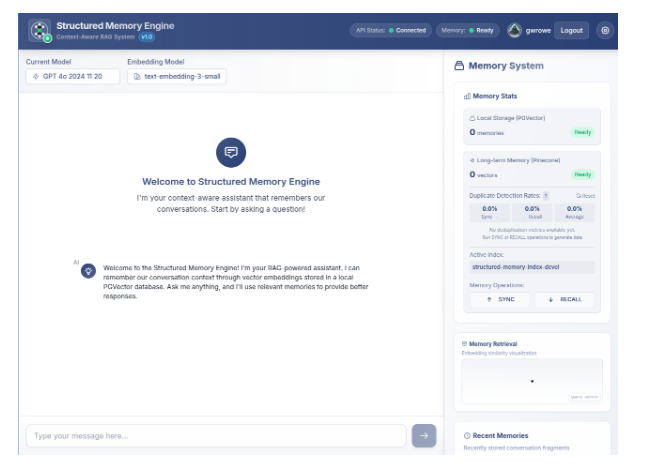

Structured Memory Engine

Archive

Proof-of-concept for persistent, cross-session AI memory. Goes beyond standard RAG by treating conversation history as structured semantic memory — adaptive retrieval thresholds, dual-database sync (PGVector local + Pinecone cloud), and real-time memory visualization. Built with TypeScript, React, OpenAI GPT-4o, and text-embedding-3-small.

ai · context-engineering · memory
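The adaptive retrieval thresholds mentioned for the Structured Memory Engine could work along these lines. This is a hedged sketch in Python rather than the project's TypeScript, and the rule itself (keep memories within a margin of the best match, subject to a hard floor) is an assumption; `adaptive_retrieve`, `floor`, `margin`, and `max_results` are hypothetical names.

```python
from typing import List, Tuple


def adaptive_retrieve(
    scored: List[Tuple[str, float]],  # (memory_id, similarity) pairs
    floor: float = 0.3,    # assumed minimum similarity worth returning
    margin: float = 0.15,  # assumed allowed distance from the best match
    max_results: int = 5,
) -> List[Tuple[str, float]]:
    """Keep memories close to the best match instead of a fixed top-k."""
    if not scored:
        return []
    ranked = sorted(scored, key=lambda pair: pair[1], reverse=True)
    # The cutoff rises with the best score but never drops below the floor.
    cutoff = max(floor, ranked[0][1] - margin)
    return [pair for pair in ranked if pair[1] >= cutoff][:max_results]
```

Compared with a fixed top-k, a relative cutoff returns nothing when every stored memory is a weak match, which avoids padding the prompt with irrelevant context.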

More experiments coming soon